Lightweight Transactions with DataStax Enterprise

Lightweight transactions are extremely powerful when used correctly. They not only enable you to use a highly durable distributed system in an ACID-like way, but they also allow you to do it with ease. In this blog, we'll explore lightweight transactions, show how DSE implements them, and call out a few pitfalls to keep in mind.

Your Data’s State is Important

Always Current

Knowing that the data being read is correct and current is paramount. Data integrity depends on predictable, testable data behaviors. With a traditional single-machine relational database system, the data is either written or not, in something of a pass/fail manner. This leaves very little ambiguity as to the state of the data. To verify that data was written correctly, you can simply select the data and it will return as expected. In extreme cases, there are mechanisms that exist to ensure this further.

Strong consistency, in both the ACID and distributed worlds, means that a user will always see the most recently written value. Banking transactions illustrate the value of this. If a person were to swipe the same card twice by mistake when purchasing an item, they shouldn’t be charged twice. If your application isn’t guaranteed to get back the most current result, how can you trust the data?

The Distributed Problem

This relatively straightforward concept becomes more complex when we start looking into distributed systems. When records are written into DSE with a consistency level of ONE, the write is sent to all nodes that hold a replica, but only a single successful acknowledgment is required for the write to be considered successful.

There are systems in place to help repair inconsistencies within data, such as read repair, hinted handoffs, and out-of-band repair. Each repairs inconsistencies at a different operational cost.

Consistency level in DSE is tunable at the individual query level and can be set so that a certain number of replicas must acknowledge a write before the query is considered a success. However, this comes at the cost of availability and performance. If the consistency level of the query requires more replicas than are currently available, an ‘UnavailableException’ is thrown. This can have a business impact, as it effectively prevents the query from completing. In some use cases, it may be acceptable to lower the consistency requirements to allow the request through despite the unavailable replicas. Fortunately, when it comes time to “count” the available nodes, only the nodes that hold replicas of the requested data are considered, so a request can still complete when the nodes that are down hold none of its replicas.
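To make the availability trade-off concrete, here is a minimal Python sketch of the acknowledgment arithmetic. It models a single datacenter only, and the function names `required_acks` and `can_serve` are hypothetical helpers for illustration, not part of any DSE API.

```python
def required_acks(consistency_level: str, replication_factor: int) -> int:
    """Replica acknowledgments needed at a given level (single datacenter)."""
    levels = {
        "ONE": 1,
        "TWO": 2,
        "THREE": 3,
        "QUORUM": replication_factor // 2 + 1,  # a majority of the replicas
        "ALL": replication_factor,
    }
    return levels[consistency_level]


def can_serve(consistency_level: str, replication_factor: int, live_replicas: int) -> bool:
    """True when enough replicas of the requested data are alive.

    Only nodes holding a replica of the data count; other nodes are ignored.
    """
    return live_replicas >= required_acks(consistency_level, replication_factor)


# Replication factor 3 with two of the three replicas down.
print(required_acks("QUORUM", 3))   # 2
print(can_serve("QUORUM", 3, 1))    # False: the request would be rejected as unavailable
print(can_serve("ONE", 3, 1))       # True: lowering the level lets the request through
```

With a replication factor of 3, losing two replicas makes QUORUM requests fail while ONE still succeeds, which is the trade-off described above.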

The other major shortcoming of simply using a high consistency level is that it doesn’t guarantee that a read runs only after the write has completed. If you were to run a DELETE and then a SELECT, you may end up returning the record you had intended to delete, as the operation may not yet be complete on all replicas (again, this depends on the consistency level being used). In some cases, users use a consistency level of LOCAL_QUORUM for both reads and writes, thus ensuring that at least one replica of all those considered has the most recent information.
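The reason quorum reads paired with quorum writes always touch at least one up-to-date replica can be checked by brute force. The sketch below enumerates every possible set of replicas that could acknowledge a write and every set that could answer a read, and tests whether they always share a member. The function name `always_overlaps` is a hypothetical helper, and the model ignores timing and datacenter topology.

```python
from itertools import combinations


def always_overlaps(replication_factor: int, write_acks: int, read_acks: int) -> bool:
    """True if every possible write set shares a replica with every possible read set."""
    replicas = range(replication_factor)
    return all(
        set(written) & set(read)  # an empty intersection means a stale read is possible
        for written in combinations(replicas, write_acks)
        for read in combinations(replicas, read_acks)
    )


rf = 3
quorum = rf // 2 + 1

print(always_overlaps(rf, quorum, quorum))  # True: quorum writes and quorum reads
print(always_overlaps(rf, 1, 1))            # False: ONE for both can miss the latest write
```

Overlap tells you the read contacts a replica that has the write; it does not order the read and the write against concurrent operations, which is the gap lightweight transactions address.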

Lightweight Transactions To the Rescue

Enter the Lightweight Transaction, a way to guarantee that the data most recently written is considered before a read. This means that the business can trust that the data is consistent for use in decision making.

How DSE Implements Lightweight Transactions

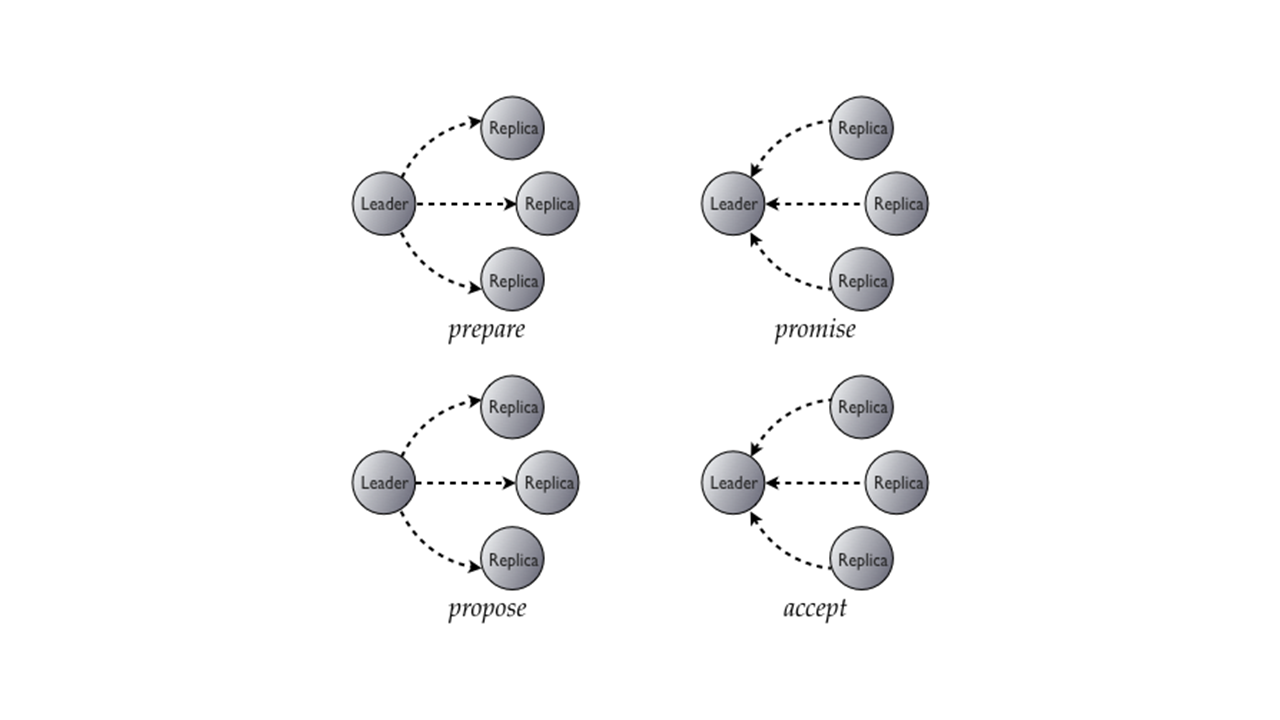

In DSE, the Paxos protocol is combined with the normal read/write operations to accomplish a compare-and-set operation. The process looks like this:

- Prepare/Promise

- Read/Results

- Propose/Accept

- Commit/Acknowledge

The result for the user is a transaction that exposes only current data, never data that still has operations being performed on it. When using lightweight transactions on a table, it is important to note that only other lightweight transactions should be used on that table.

Since DSE is a distributed system, any node can play the part of a proposer and any node can be an acceptor. The node that acts as the proposer sends a message to a quorum of the replica nodes. This message has an identifying number attached that marks the transaction. When an acceptor receives this message, it sends back a reply promising that it will accept the data still to come. Once the proposer receives the confirmation replies from the acceptors, it collects the current values from the acceptors. The proposer then determines what value to use and sends it in a proposal to the acceptors. The proposal is accepted only if the acceptor has not already promised itself to a proposal with a higher number. After being accepted, the value is committed and acknowledged as a normal DSE write.
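The four phases can be sketched as a small in-memory simulation. This is a simplified model under stated assumptions, not DSE's implementation: the `Acceptor` class and `compare_and_set` function are hypothetical, ballots are plain integers, all replicas are reachable and in sync, and the recovery of half-finished proposals that real Paxos performs is left out.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Acceptor:
    """One replica, reduced to the state this sketch needs (hypothetical model)."""
    promised: int = 0                # highest ballot this replica has promised
    committed: Optional[str] = None  # value visible to readers

    def prepare(self, ballot: int) -> bool:
        # Prepare/Promise: only promise to a ballot newer than any seen so far.
        if ballot > self.promised:
            self.promised = ballot
            return True
        return False

    def propose(self, ballot: int) -> bool:
        # Propose/Accept: refuse if a higher ballot was promised in the meantime.
        return ballot >= self.promised

    def commit(self, value: str) -> None:
        # Commit/Acknowledge: the value becomes visible like a normal write.
        self.committed = value


def compare_and_set(replicas: List[Acceptor], ballot: int,
                    expected: Optional[str], new_value: str) -> bool:
    quorum = len(replicas) // 2 + 1

    # 1. Prepare/Promise
    promises = sum(1 for r in replicas if r.prepare(ballot))
    if promises < quorum:
        return False

    # 2. Read/Results (simplification: replicas are assumed to be in sync)
    current = replicas[0].committed
    if current != expected:
        return False

    # 3. Propose/Accept
    accepts = sum(1 for r in replicas if r.propose(ballot))
    if accepts < quorum:
        return False

    # 4. Commit/Acknowledge
    for r in replicas:
        r.commit(new_value)
    return True


replicas = [Acceptor() for _ in range(3)]
print(compare_and_set(replicas, 1, None, "alice"))     # True: no value existed yet
print(compare_and_set(replicas, 2, None, "bob"))       # False: the condition no longer holds
print(compare_and_set(replicas, 1, "alice", "carol"))  # False: ballot 1 is stale
print(compare_and_set(replicas, 3, "alice", "carol"))  # True
```

Each numbered comment corresponds to one round trip between the proposer and the acceptors, which is where the cost of a lightweight transaction comes from.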

As we can see above, this process requires no fewer than four round trips between nodes for a single transaction. This further increases when requiring a consistency level of QUORUM. For this reason, lightweight transactions are relatively expensive to use. They should only be used when absolutely needed, such as in situations where concurrency is a factor.

Consistency

When configuring consistency levels to be used with lightweight transactions, it is important to understand both SERIAL and LOCAL_SERIAL. These consistency levels are specific to lightweight transactions. SERIAL acts somewhat like a lock on the data being operated on, so that the correct data is returned when it is used with both reads and writes.

Reads

When reading, use level SERIAL to return the most current value, including any from in-flight lightweight transactions. From a functional standpoint, SERIAL behaves much like QUORUM. LOCAL_SERIAL works only within the local datacenter. LOCAL_SERIAL is to SERIAL what LOCAL_QUORUM is to QUORUM.

Writes

When writing, use level SERIAL to achieve linearizable consistency. In the ACID world, linearizable consistency corresponds to a serial (immediate) isolation level, which is what lightweight transactions need. LOCAL_SERIAL is the equivalent consistency level restricted to the local datacenter. Both SERIAL and LOCAL_SERIAL are used to handle failure scenarios with lightweight transactions. Anything that is not a conditional update will ignore SERIAL and function as QUORUM instead.

It is important to note that SERIAL and LOCAL_SERIAL function like QUORUM and LOCAL_QUORUM, respectively, with regard to network traffic and limiting requests to datacenters.

Working With In-Progress Transactions

When multiple lightweight transactions are run against the same data at the same time, the newest transaction will be blocked. This is because the first request must complete before any other request is allowed to make changes, which guarantees that correct data is returned at all times.

Blocking is handled by the acceptors using the request numbers for each transaction. Acceptors only accept the request with the highest request number they have received. If they were to receive two requests at exactly the same time, the one with the higher identifier would win. Any other condition would result in the new request simply being blocked.
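The tie-breaking rule can be illustrated with a short Python sketch. Request numbers are modeled here as hypothetical `(timestamp, node_id)` tuples so that two requests issued at the same instant still compare unequal; the `PromiseTracker` class and the tuple layout are assumptions made for illustration, not DSE's actual ballot format.

```python
class PromiseTracker:
    """Tracks the highest request number an acceptor has promised (hypothetical model)."""

    def __init__(self):
        self.promised = None

    def prepare(self, ballot) -> bool:
        # Promise only if this request number is higher than any seen before.
        if self.promised is None or ballot > self.promised:
            self.promised = ballot
            return True
        return False


acceptor = PromiseTracker()
ballot_a = (1000, "node-a")  # same timestamp, lower identifier
ballot_b = (1000, "node-b")  # same timestamp, higher identifier

print(acceptor.prepare(ballot_a))            # True: first request seen
print(acceptor.prepare(ballot_b))            # True: the higher identifier wins the tie
print(acceptor.prepare(ballot_a))            # False: the older request is now blocked
print(acceptor.prepare((1001, "node-a")))    # True: a retry with a newer number gets through
```

Python compares tuples element by element, so the node identifier only matters when the timestamps are equal.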

Mixing Request Types

If you try to run a non-lightweight operation against a table where lightweight transactions are being run, a number of errors can occur. Non-lightweight operations do not honor the blocking placed on in-progress updates by lightweight transactions. For example, if you run a non-lightweight delete against a row and then run a lightweight select, the select may still return the row even though it should already be gone. It is important to keep these kinds of cases in mind.

If Clause

Lightweight transaction INSERT and UPDATE statements support the use of the IF clause. The IF clause forces operational uniqueness by testing for an existing condition before performing the statement’s action. In an UPDATE, operators such as <, <=, >, >=, != and IN can be used in the IF condition, alongside the usual WHERE clause, to achieve the desired results.
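The semantics of the IF clause can be modeled with a plain dictionary. The sketch below is an in-memory illustration of what `INSERT ... IF NOT EXISTS` and `UPDATE ... IF <condition>` do, applied to the double card swipe described earlier. The helper names, the `payments` table, and its columns are hypothetical; the boolean return value stands in for the applied/not-applied outcome that the database reports for a conditional statement.

```python
from typing import Any, Callable, Dict

Row = Dict[str, Any]
Table = Dict[str, Row]


def insert_if_not_exists(table: Table, key: str, row: Row) -> bool:
    """Models INSERT ... IF NOT EXISTS: applied only when the key is absent."""
    if key in table:
        return False
    table[key] = dict(row)
    return True


def update_if(table: Table, key: str, changes: Row,
              condition: Callable[[Row], bool]) -> bool:
    """Models UPDATE ... WHERE key = ... IF <condition>."""
    row = table.get(key)
    if row is None or not condition(row):
        return False
    row.update(changes)
    return True


payments: Table = {}
charge = {"amount": 25, "status": "pending"}

print(insert_if_not_exists(payments, "txn-42", charge))  # True: first swipe is recorded
print(insert_if_not_exists(payments, "txn-42", charge))  # False: duplicate swipe is rejected


def is_pending(row: Row) -> bool:
    return row["status"] == "pending"


print(update_if(payments, "txn-42", {"status": "settled"}, is_pending))  # True
print(update_if(payments, "txn-42", {"status": "settled"}, is_pending))  # False: already settled
```

In a real cluster the check and the write happen atomically through the Paxos rounds described above; the dictionary version only shows the outcome a client should expect.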